This notebook contains an excerpt from Python Programming and Numerical Methods - A Guide for Engineers and Scientists; the content is also available at Berkeley Python Numerical Methods.

The copyright of the book belongs to Elsevier. We also have this interactive book online for a better learning experience. The code is released under the MIT license. If you find this content useful, please consider supporting the work on Elsevier or Amazon!


Newton’s Polynomial Interpolation

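Newton’s polynomial interpolation is another popular way to fit a set of data points exactly. The general form of the order $n-1$ Newton’s polynomial that goes through the $n$ points $(x_0, y_0), \dots, (x_{n-1}, y_{n-1})$ is:

$$f(x) = a_0 + a_1(x-x_0) + a_2(x-x_0)(x-x_1) + \dots + a_{n-1}(x-x_0)(x-x_1)\dots(x-x_{n-2})$$

which can be re-written as:

$$f(x) = \sum_{i=0}^{n-1} a_i n_i(x)$$

where $n_i(x) = \prod_{j=0}^{i-1}(x - x_j)$, with the empty product giving $n_0(x) = 1$.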
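As a small illustration of the summation form, the sketch below evaluates $f(x) = \sum_i a_i n_i(x)$ term by term in plain Python. The function names `newton_basis` and `newton_sum`, and the coefficients and nodes used, are made up for this example and do not come from the text.

```python
def newton_basis(i, x_data, x):
    """n_i(x) = (x - x_0)(x - x_1)...(x - x_{i-1}); the empty product n_0(x) is 1."""
    result = 1.0
    for j in range(i):
        result *= x - x_data[j]
    return result


def newton_sum(a, x_data, x):
    """Evaluate f(x) = sum_i a_i * n_i(x) term by term."""
    return sum(a_i * newton_basis(i, x_data, x) for i, a_i in enumerate(a))


# Made-up coefficients and nodes, purely for illustration:
# f(x) = 1 + 2(x - 0) + 3(x - 0)(x - 1)
a = [1.0, 2.0, 3.0]
x_data = [0.0, 1.0, 2.0]
print(newton_sum(a, x_data, 2.0))  # 1 + 2*2 + 3*2*1 = 11.0
```

Evaluating each basis product from scratch repeats work, so this form is mainly useful for checking the definition; a nested (Horner-style) evaluation avoids the repeated products.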

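The special feature of Newton’s polynomial is that the coefficients $a_i$ can be determined using a very simple mathematical procedure. Since the polynomial goes through every data point, for each data point $(x_i, y_i)$ we have $f(x_i) = y_i$. For the first point this gives

$$f(x_0) = a_0 = y_0$$

And $f(x_1) = a_0 + a_1(x_1 - x_0) = y_1$; rearranging for $a_1$ gives:

$$a_1 = \frac{y_1 - y_0}{x_1 - x_0}$$

Now, inserting the data point $(x_2, y_2)$, we can calculate $a_2$, which has the form:

$$a_2 = \frac{\frac{y_2 - y_1}{x_2 - x_1} - \frac{y_1 - y_0}{x_1 - x_0}}{x_2 - x_0}$$

Let’s do one more data point, $(x_3, y_3)$, to calculate $a_3$. After inserting the data point into the equation, we get:

$$a_3 = \frac{\frac{\frac{y_3 - y_2}{x_3 - x_2} - \frac{y_2 - y_1}{x_2 - x_1}}{x_3 - x_1} - \frac{\frac{y_2 - y_1}{x_2 - x_1} - \frac{y_1 - y_0}{x_1 - x_0}}{x_2 - x_0}}{x_3 - x_0}$$

Now, see the pattern? These are called divided differences. If we define:

$$f[x_1, x_0] = \frac{y_1 - y_0}{x_1 - x_0}$$

$$f[x_2, x_1, x_0] = \frac{f[x_2, x_1] - f[x_1, x_0]}{x_2 - x_0}$$

and continue writing this out, we get the following recursive equation:

$$f[x_k, x_{k-1}, \dots, x_1, x_0] = \frac{f[x_k, x_{k-1}, \dots, x_1] - f[x_{k-1}, x_{k-2}, \dots, x_0]}{x_k - x_0}$$

so that each coefficient is a divided difference, $a_k = f[x_k, x_{k-1}, \dots, x_0]$.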
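The recursion for divided differences says that a difference of order $k$ is the difference of two order $k-1$ differences, one that drops the first point and one that drops the last, divided by $x_k - x_0$. A minimal sketch of that rule as a recursive function follows; the name `divided_difference` is an assumption for this example, and the data are the four points used in the TRY IT example in this section.

```python
def divided_difference(x, y):
    """Return f[x_k, ..., x_0] for the points (x[0], y[0]), ..., (x[k], y[k])."""
    if len(x) == 1:
        return float(y[0])  # zeroth order: f[x_0] = y_0
    upper = divided_difference(x[1:], y[1:])    # f[x_k, ..., x_1]
    lower = divided_difference(x[:-1], y[:-1])  # f[x_{k-1}, ..., x_0]
    return (upper - lower) / (x[-1] - x[0])


x = [-5, -1, 0, 2]
y = [-2, 6, 1, 3]
print(divided_difference(x[:2], y[:2]))  # f[x_1, x_0] = (6 - (-2)) / (-1 - (-5)) = 2.0
print(divided_difference(x[:3], y[:3]))  # f[x_2, x_1, x_0]
print(divided_difference(x, y))          # f[x_3, x_2, x_1, x_0]
```

This direct recursion recomputes the same lower-order differences many times, so it is only practical for a handful of points. Storing the intermediate values in a table avoids the repeated work.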

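One beauty of the method is that, once the coefficients are determined, adding new data points does not change the ones already calculated; we only need to calculate the higher-order differences in the same manner. The whole procedure for finding these coefficients can be summarized in a divided differences table. Let’s see an example using 5 data points:

$$
\begin{array}{cccccc}
x_0 & y_0 \\
    &     & f[x_1,x_0] \\
x_1 & y_1 &            & f[x_2,x_1,x_0] \\
    &     & f[x_2,x_1] &                & f[x_3,x_2,x_1,x_0] \\
x_2 & y_2 &            & f[x_3,x_2,x_1] &                    & f[x_4,x_3,x_2,x_1,x_0] \\
    &     & f[x_3,x_2] &                & f[x_4,x_3,x_2,x_1] \\
x_3 & y_3 &            & f[x_4,x_3,x_2] \\
    &     & f[x_4,x_3] \\
x_4 & y_4
\end{array}
$$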

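Each element in the table can be calculated using the two previous elements (to the left). In practice, we can calculate each element and store it in a square matrix in which only the upper-left triangle is filled; that is, the coefficient matrix can be written as:

$$
\begin{array}{ccccc}
y_0 & f[x_1,x_0] & f[x_2,x_1,x_0] & f[x_3,x_2,x_1,x_0] & f[x_4,x_3,x_2,x_1,x_0] \\
y_1 & f[x_2,x_1] & f[x_3,x_2,x_1] & f[x_4,x_3,x_2,x_1] & 0 \\
y_2 & f[x_3,x_2] & f[x_4,x_3,x_2] & 0 & 0 \\
y_3 & f[x_4,x_3] & 0 & 0 & 0 \\
y_4 & 0 & 0 & 0 & 0
\end{array}
$$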

Note that the first row of the matrix contains all the coefficients that we need, i.e., $a_0, a_1, a_2, a_3, a_4$. Let’s see an example of how to do this.

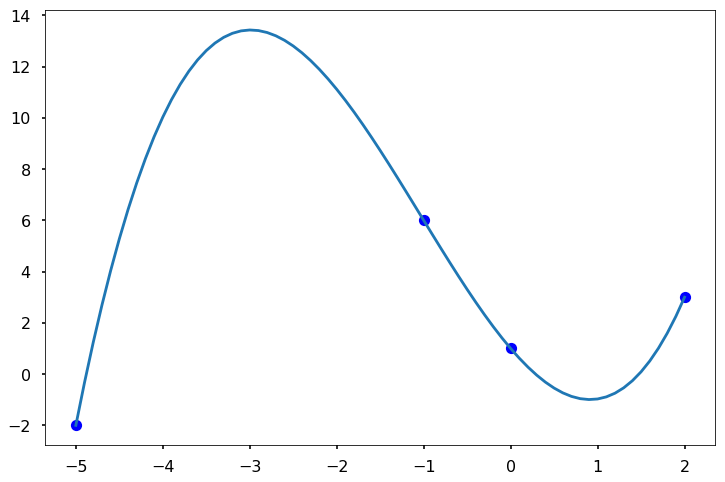

TRY IT! Calculate the divided differences table for x = [-5, -1, 0, 2], y = [-2, 6, 1, 3].
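For reference, the table entries for these four points can also be worked out by hand. Writing $f[x_j, \dots, x_i]$ for the divided difference over the points $x_i$ through $x_j$, and applying the rule that each entry is the difference of the two lower-order entries divided by the difference of the outermost $x$ values, gives (a hand computation, not output copied from the book):

$$
\begin{aligned}
f[x_1,x_0] &= \frac{6-(-2)}{-1-(-5)} = 2, \qquad f[x_2,x_1] = \frac{1-6}{0-(-1)} = -5, \qquad f[x_3,x_2] = \frac{3-1}{2-0} = 1 \\
f[x_2,x_1,x_0] &= \frac{-5-2}{0-(-5)} = -1.4, \qquad f[x_3,x_2,x_1] = \frac{1-(-5)}{2-(-1)} = 2 \\
f[x_3,x_2,x_1,x_0] &= \frac{2-(-1.4)}{2-(-5)} = \frac{17}{35} \approx 0.4857
\end{aligned}
$$

The coefficients are therefore $a_0 = -2$, $a_1 = 2$, $a_2 = -1.4$, and $a_3 \approx 0.4857$. As a check, at $x = 2$ the polynomial gives $-2 + 2(7) - 1.4(7)(3) + \frac{17}{35}(7)(3)(2) = 3$, which matches the last data point.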

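    import numpy as np

    def divided_diff(x, y):
        '''
        function to calculate the divided
        differences table
        '''
        n = len(y)
        coef = np.zeros([n, n])
        # the first column is y
        coef[:, 0] = y

        # column j holds the divided differences of order j;
        # each entry uses the two entries to its left
        for j in range(1, n):
            for i in range(n - j):
                coef[i, j] = (coef[i+1, j-1] - coef[i, j-1]) / (x[i+j] - x[i])

        return coef

    def newton_poly(coef, x_data, x):
        '''
        evaluate the newton polynomial
        at x, where coef is the first row of
        the divided differences table
        '''
        n = len(x_data) - 1
        # nested multiplication, starting from the highest coefficient
        p = coef[n]
        for k in range(1, n + 1):
            p = coef[n-k] + (x - x_data[n-k]) * p
        return p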
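Since only the first row of the table is needed, the coefficients can also be computed in a single list that is overwritten in place. The sketch below is a plain-Python variant written for this illustration, not the book's code: the names `newton_coefficients` and `newton_eval` are assumptions, and the extra point `(3, 4)` is made up. It applies the variant to the TRY IT data, checks that the polynomial reproduces every data point, and shows that appending a point leaves the earlier coefficients unchanged.

```python
def newton_coefficients(x, y):
    """Return a_0..a_{n-1}, the first row of the divided differences table."""
    a = [float(v) for v in y]
    n = len(x)
    for j in range(1, n):
        # Work from the bottom up so a[i-1] still holds its order j-1 value.
        for i in range(n - 1, j - 1, -1):
            a[i] = (a[i] - a[i - 1]) / (x[i] - x[i - j])
    return a


def newton_eval(a, x_data, x):
    """Evaluate the Newton polynomial at a scalar x by nested multiplication."""
    p = a[-1]
    for k in range(len(a) - 2, -1, -1):
        p = a[k] + (x - x_data[k]) * p
    return p


x = [-5, -1, 0, 2]
y = [-2, 6, 1, 3]
a = newton_coefficients(x, y)

# The polynomial should reproduce every data point (up to rounding).
residuals = [newton_eval(a, x, xi) - yi for xi, yi in zip(x, y)]

# Appending a point (the value 4 at x = 3 is made up for illustration)
# adds one coefficient; the first four stay the same.
a_more = newton_coefficients(x + [3], y + [4])

print(a)
print(a_more[:4])
print(residuals)
```

Keeping one list instead of the full table saves memory, at the cost of discarding the intermediate differences that the full table keeps for inspection.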

We can see that Newton’s polynomial goes through all the data points and fits the data.
